Waymo, Alphabet’s self-driving technology subsidiary and a sister company of Google, is working to integrate Google’s Gemini artificial intelligence into its robotaxi fleet. The effort reflects the company’s push to improve the in-car passenger experience using Google’s latest AI models.

Gemini as an In-Car AI Assistant

Recent code analysis by app researcher Jane Manchun Wong suggests Waymo is testing Gemini as an in-car AI companion designed to interact with passengers during rides. While the functionality has not yet been officially released or enabled for public use, internal prompts discovered in the Waymo app indicate that the system would go beyond basic chat capabilities.

According to the prompts found in the app, the Gemini-powered assistant would:

- Answer rider questions about general topics.

- Manage select cabin controls such as climate, lighting, and music.

- Provide reassurance and assistance to passengers during the ride.

The AI companion is instructed to use clear, simple language and keep responses concise, typically one to three sentences. When activated via the in-car interface, Gemini could greet passengers by name and tailor interactions based on contextual information, such as the number of previous Waymo rides the user has taken.

Importantly, the Gemini assistant would not interfere with the vehicle’s navigation or autonomous driving systems. It is explicitly directed to distinguish its role from the Waymo Driver (the autonomous driving stack) and avoid attempting functions such as route changes or real-world actions like ordering food.
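
To make the reported guardrails concrete, here is a minimal Python sketch of how such a policy could be expressed. It is purely illustrative: the action names, the `Decision` type, and the `route_request` and `trim_reply` helpers are all hypothetical and do not come from Waymo's app or any Google API. It only encodes the three rules described above: cabin controls and general questions are in scope, driving-related and real-world actions are declined, and replies are capped at three sentences.

```python
import re
from dataclasses import dataclass

# Hypothetical action names, loosely based on the article's description.
IN_SCOPE = {"answer_question", "set_temperature", "set_lighting", "play_music"}
# Requests the assistant is reportedly told to decline.
OUT_OF_SCOPE = {"change_route", "order_food"}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def route_request(action: str) -> Decision:
    """Decide whether the assistant may handle a requested action."""
    if action in IN_SCOPE:
        return Decision(True, "within the assistant's role")
    if action in OUT_OF_SCOPE:
        return Decision(False, "driving and real-world actions are not handled")
    # Anything unrecognized is declined by default in this sketch.
    return Decision(False, "unknown action")


def trim_reply(text: str, max_sentences: int = 3) -> str:
    """Keep at most `max_sentences` sentences of a reply."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(sentences[:max_sentences])


if __name__ == "__main__":
    for action in ("set_temperature", "change_route"):
        print(action, route_request(action))
    print(trim_reply("One. Two. Three. Four."))
```

Declining unknown actions by default is a design choice of this sketch, not something the discovered prompts are reported to specify.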

How Waymo Uses AI and Advanced Technology

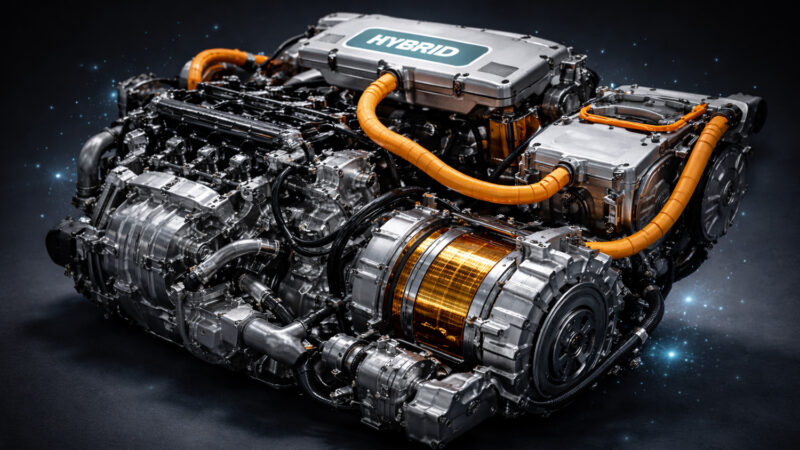

Beyond the in-car assistant concept, Waymo’s autonomous vehicles are built on a complex suite of technologies to perceive and navigate real-world environments. The company’s self-driving system, the Waymo Driver, relies on a combination of:

- LiDAR, radar, and cameras to sense surrounding objects and conditions.

- Machine learning models trained on massive driving data sets to interpret and respond to dynamic traffic scenarios.

- Simulation-based testing that allows software to be validated across millions of virtual miles before deployment.

In recent developments, Waymo has leaned on Google’s Tensor Processing Units (TPUs) and the JAX machine-learning framework – both also used in training large AI models such as Gemini – to unify and accelerate its autonomous driving training stack. This shared infrastructure enables Waymo to train more generalized and scalable models for diverse driving environments, reducing the time needed to expand into new cities.

Expected Innovations and Future Applications

Integrating Gemini into robotaxis represents a broader trend of bringing large multimodal AI models into everyday mobility services. If successfully deployed, the assistant could:

- Enhance the customer experience, making rides more interactive and accommodating.

- Provide contextual information and comfort, answering rider questions about surroundings or trip details.

- Support additional in-car features that improve convenience.

Although the timeline for any public rollout remains uncertain, Waymo’s exploration of Gemini integration signals a potential shift in how autonomous vehicles interact with passengers – moving from purely transport functions toward intelligent, AI-augmented user experiences.

In parallel, Waymo continues to expand its robotaxi operations in the U.S. and is targeting international markets, aiming for 1 million weekly rides and service in cities such as London and Tokyo by the end of 2026.