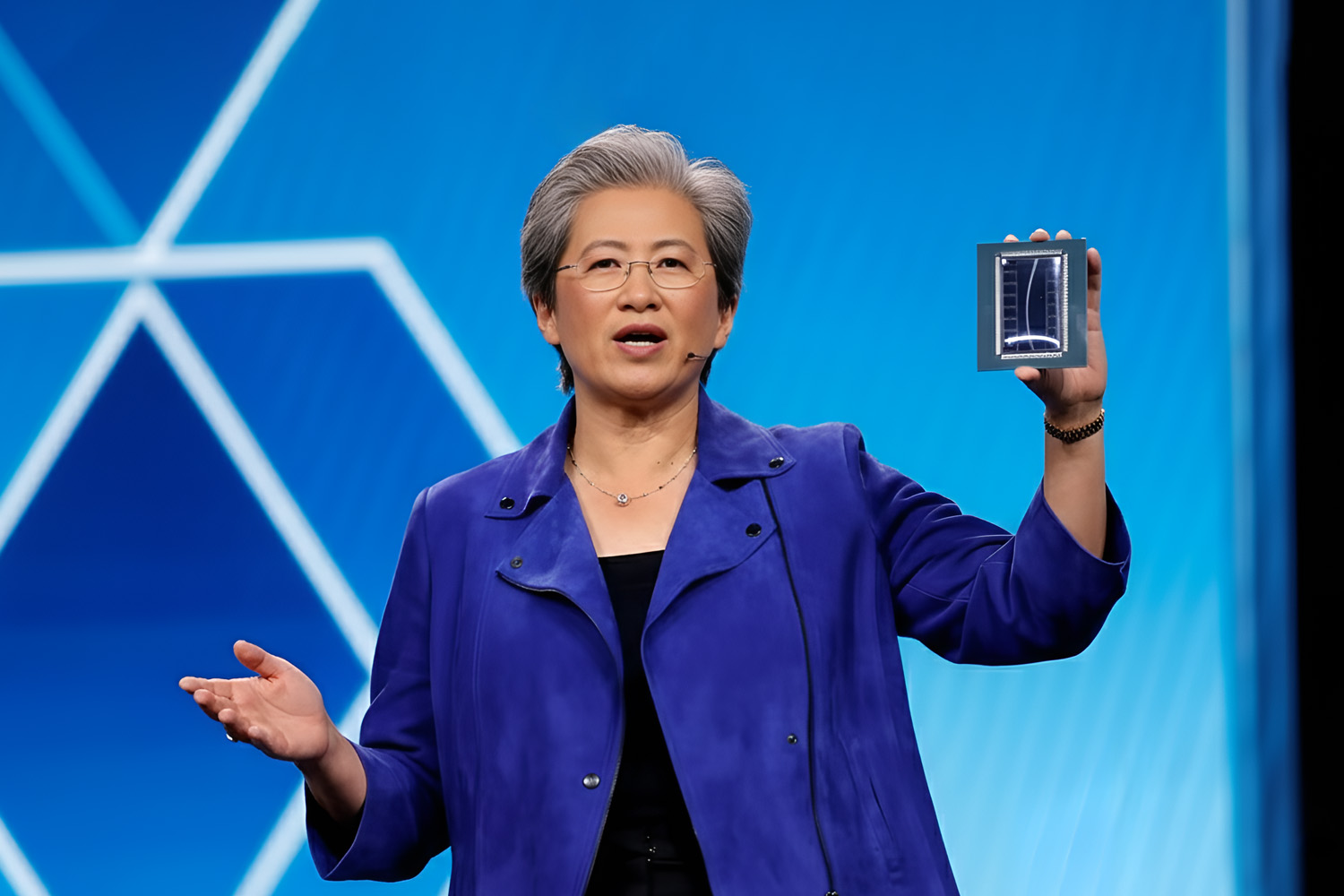

At CES 2026 in Las Vegas, Advanced Micro Devices (AMD) unveiled its latest artificial intelligence hardware, expanding its Instinct MI400-series GPU lineup to meet surging demand for AI compute. AMD CEO Dr. Lisa Su showcased the new MI455 and MI440X accelerators as part of the company’s broad AI infrastructure strategy, which is designed to support workloads from hyperscale data centers to enterprise deployments.

MI455: High-End AI Performance for Data Centers

The MI455 GPU is AMD’s newest data-center AI accelerator. Built on advanced process technology and the company’s CDNA architecture, the chip targets large-scale AI training and inference workloads. It plays a central role in AMD’s Helios rack-scale platform, a modular design that AMD says is intended to deliver industry-leading performance, with multiple exaflops of AI compute within a single rack.

Helios, powered by MI455 GPUs and next-generation EPYC “Venice” CPUs, targets hyperscale AI clusters used to train trillion-parameter models and support massive inference demands from leading AI developers. AMD positions this architecture as a “blueprint” for future yotta-scale computing infrastructure, reflecting projections that global AI compute requirements may reach unprecedented levels in the coming years.

MI440X: Enterprise AI for On-Prem Deployments

Alongside the MI455, AMD introduced the MI440X accelerator, a GPU tailored for enterprise and on-premises AI applications. Unlike hyperscale solutions that require specialized data center infrastructure, the MI440X is designed to fit into compact eight-GPU systems, making it suitable for corporate and mid-size data centers that need scalable AI performance without an extensive overhaul of existing hardware.

The MI440X expands the MI400 lineup by offering AI training, fine-tuning, and inference capabilities in a form factor intended to integrate with standard enterprise servers. This positions AMD to better serve customers seeking greater AI compute power while maintaining localized control of sensitive workloads.

Strategic Context and Market Impact

These announcements build on AMD’s broader AI computing vision, which spans cloud, enterprise, and edge deployments. The company also previewed its upcoming MI500 Series GPUs, expected to launch in 2027 with dramatically higher performance, and highlighted the Ryzen AI 400 Series for consumer and workstation AI.

AMD’s expanded AI portfolio arrives amid intensifying competition with rivals such as NVIDIA, which continues to lead the AI accelerator market. However, AMD executives emphasized the importance of open platforms and modular designs that can scale across a wide range of applications.

Partnerships and Future Roadmaps

During the CES keynote, AMD reaffirmed collaborations with major AI developers, including OpenAI, which has publicly highlighted the need for advanced compute to support its generative AI models. These partnerships underscore AMD’s strategy of positioning its hardware at the core of next-generation AI infrastructure.

Looking ahead, AMD’s roadmap, from the MI400 series to the future MI500 accelerators, signals sustained investment in AI hardware for both large-scale data centers and enterprise environments, in support of a broadening range of AI workloads across the tech industry.